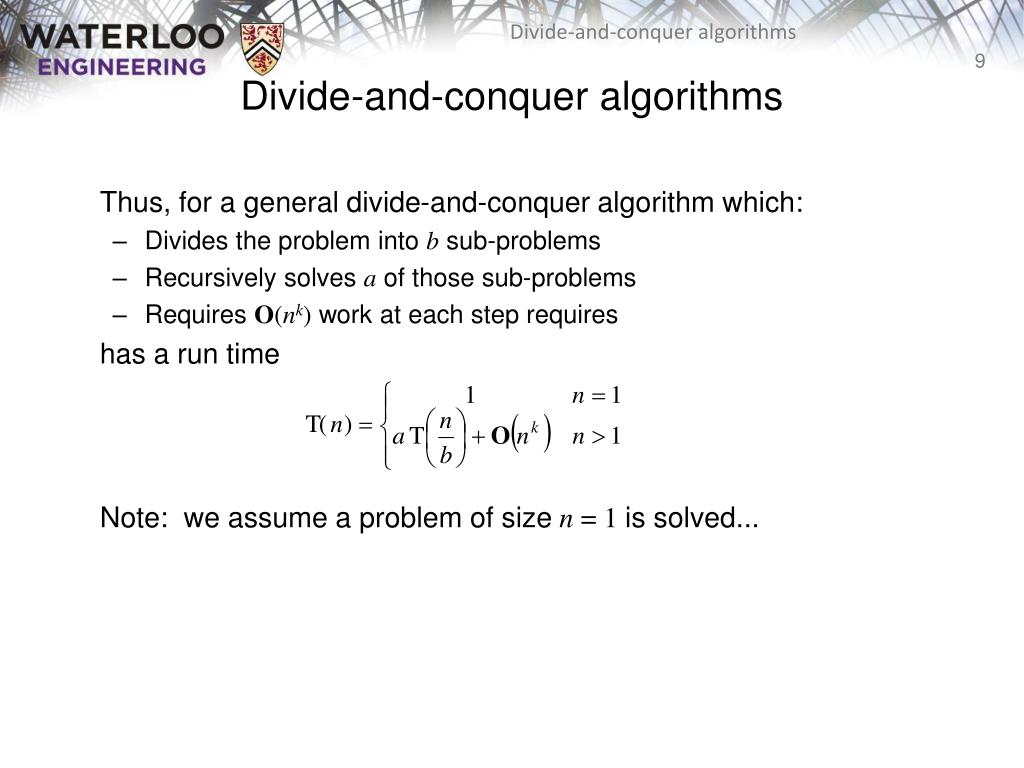

Divide-and-Conquer with Multithreading: Split the n inputs into n / b blocks of size b and recursively multiply all the numbers in each block together. Then, recursively multiply the n / b block products back together. If you have multiple cores and can run parts of this in parallel, you could save a lot of time overall.

Word-Level Parallelism: Let's suppose that your numbers are all non-negative and strictly less than some number $U$, which happens to be a power of two. Now, suppose that you want to multiply together $a$, $b$, $c$, and $d$. Start off by computing

$$(4U^2 a + b) \times (4U^2 c + d) = 16U^4 ac + 4U^2 ad + 4U^2 bc + bd.$$

Now, notice that this expression mod $U^2$ is just $bd$: every other term is a multiple of $U^2$, and since $bd < U^2$, we don't need to worry about the mod $U^2$ step messing it up. This means that if we compute this product and take it mod $U^2$, we get back $bd$. Since $U^2$ is a power of two, this can be done with a bitmask.

Next, notice that the lower-order terms are bounded:

$$4U^2 ad + 4U^2 bc + bd < 4U^4 + 4U^4 + U^2 < 9U^4 < 16U^4.$$

This means that if we divide the entire expression by $16U^4$ and round down, we will end up getting back just $ac$. This division can be done with a bitshift, since $16U^4$ is a power of two.

Consequently, with one multiplication, you can get back the values of both $ac$ and $bd$ by applying a subsequent bitshift and bitmask. Once you have $ac$ and $bd$, you can directly multiply them together to get back the value of $abcd$. Assuming that bitmasks and bitshifts are faster than multiplies, this reduces the number of multiplications necessary by 33% (two instead of three here).

Your divide and conquer suggestion was a good start. It just needed more explanation to impress. With the fast multiplication algorithms used to multiply large numbers (big-ints), it is much more efficient to multiply similar-sized multiplicands than a series of mismatched sizes.

Here's an example in Clojure: define a vector of 100K random integers between 2 and 100 inclusive, and take as the baseline naive multiplication accumulating linearly through the array.

Note that this improvement does not rely on a set limit for the size of the integers in the array (100 was chosen arbitrarily and to demonstrate the next algorithm), but only that they be similar in size. As the numbers get larger and more expensive to multiply, it would make sense to invest more time in iteratively grouping them by similar size. Here I am relying on random distribution and chance that the sizes will stay similar, but it is still going to be significantly better than the naive approach even in the worst case.

As suggested by Evgeny Kluev in the comments, for a large number of small integers there is going to be a lot of duplication, so efficient exponentiation is also better than naive multiplication.
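The contrast between linear accumulation and multiplying similar-sized operands can be sketched in Python, whose built-in integers are arbitrary precision and stand in for big-ints here. This is a rough illustration, not the original Clojure code: the function names `naive_product`, `balanced_product`, and `grouped_power_product` are made up for this sketch, and the pairing strategy (adjacent neighbours, level by level) is just one simple way to keep operand sizes close.

```python
import random
from collections import Counter
from functools import reduce
from operator import mul


def naive_product(nums):
    """Accumulate linearly: a growing running product times one small factor per step."""
    return reduce(mul, nums, 1)


def balanced_product(nums):
    """Multiply neighbours pairwise, level by level, so operands stay similar in size."""
    level = list(nums)
    if not level:
        return 1
    while len(level) > 1:
        paired = [level[i] * level[i + 1] for i in range(0, len(level) - 1, 2)]
        if len(level) % 2:  # odd count: carry the last element up unchanged
            paired.append(level[-1])
        level = paired
    return level[0]


def grouped_power_product(nums):
    """Collapse duplicates into powers first, then combine the powers pairwise."""
    counts = Counter(nums)
    return balanced_product([base ** exp for base, exp in counts.items()])


if __name__ == "__main__":
    random.seed(0)  # arbitrary seed, only to make the run repeatable
    data = [random.randint(2, 100) for _ in range(100_000)]
    # All three strategies must agree on the result; only the cost differs.
    assert naive_product(data) == balanced_product(data) == grouped_power_product(data)
```

Timing the three functions (for example with `timeit`) on the 100K-element input is the natural next step. How large the gap is depends on the big-int implementation, so no figures are claimed here.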
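The word-level parallelism trick can be checked with a short Python sketch. It assumes every input is non-negative and strictly less than U = 2^k. The function name `packed_product_of_four` and the parameter `u_bits` (the exponent k) are hypothetical names chosen for this illustration.

```python
def packed_product_of_four(a, b, c, d, u_bits):
    """Return a*b*c*d using two multiplications, for 0 <= a, b, c, d < U = 2**u_bits."""
    limit = 1 << u_bits
    if not all(0 <= x < limit for x in (a, b, c, d)):
        raise ValueError("all inputs must satisfy 0 <= x < 2**u_bits")

    pack_shift = 2 * u_bits + 2           # multiplying by 4U^2 is a left shift by 2k + 2 bits
    high_shift = 4 * u_bits + 4           # dividing by 16U^4 is a right shift by 4k + 4 bits
    low_mask = (1 << (2 * u_bits)) - 1    # reducing mod U^2 keeps the low 2k bits

    # Multiplication 1: (4U^2*a + b) * (4U^2*c + d)
    packed = ((a << pack_shift) | b) * ((c << pack_shift) | d)

    bd = packed & low_mask     # low bits hold b*d
    ac = packed >> high_shift  # high bits hold a*c

    # Multiplication 2: combine the two partial products.
    return ac * bd


if __name__ == "__main__":
    # U = 16, so every input must be below 16.
    print(packed_product_of_four(3, 5, 7, 11, 4))  # 1155
```

Python integers never overflow, so the sketch works for any k. In a fixed-width language each packed factor is below 4U^3, so the packed product needs up to 6k + 4 bits. That limits k to 10 for an unsigned 64-bit word.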
